Hackers figured out how to make Tesla’s Autopilot steer into oncoming traffic

A group of Chinese hackers say that they can trick a Tesla on Autopilot into steering into oncoming traffic with just three stickers.

Hackers with the Tencent Keen Security Lab recently released a report testing how easily a Tesla Model S’s Autopilot system could be duped into driving into danger, according to reporting from Forbes.

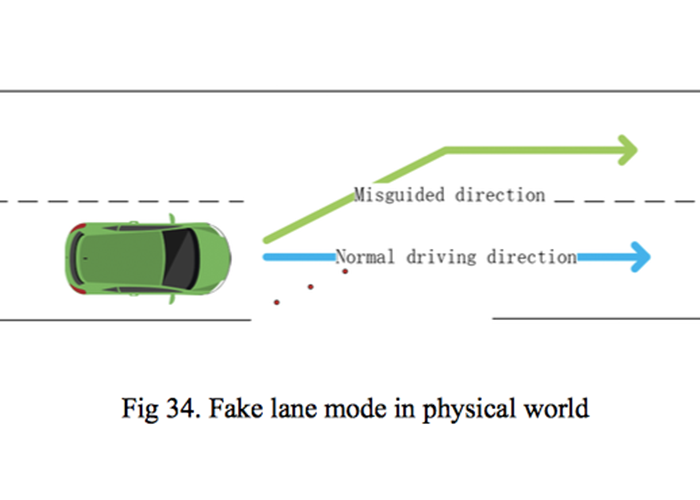

Though the group tried numerous tactics to gain control of the Tesla, one of the most alarming findings in the Keen Security Lab report was that researchers were able to steer a Tesla off course using just three stickers placed on the roadway, creating a “fake lane.”

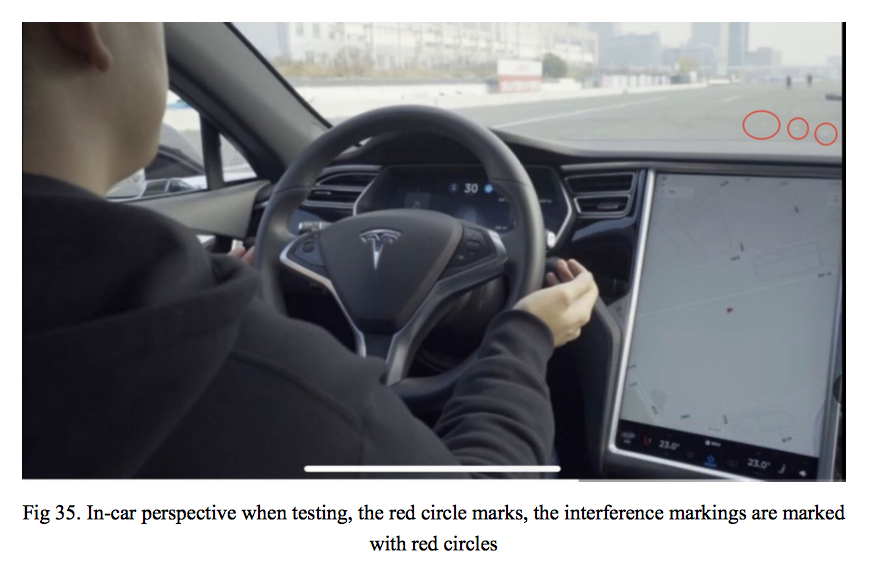

Researchers set up an experiment and carefully placed three stickers that were nearly invisible to a human driver, but the Tesla’s Autopilot interpreted them as a sign that the lane was shifting left even though it wasn’t. The Tesla moved left into what could have been oncoming traffic in a real-world situation.

The group wrote:

> Tesla uses a pure computer vision solution for lane recognition, and we found in this attack experiment that the vehicle driving decision is only based on computer vision lane recognition results. Our experiments proved that this architecture has security risks and reverse lane recognition is one of the necessary functions for autonomous driving in non-closed roads. In the scene we build, if the vehicle knows that the fake lane is pointing to the reverse lane, it should ignore this fake lane and then it could avoid a traffic accident.

Researchers were also able to trick the Tesla into believing that it was raining, causing the windshield wipers to activate.

Tesla responded to the report by saying that it had already fixed the problem:

> While we always appreciate this group’s work, the primary vulnerability addressed in this report was fixed by Tesla through a robust security update in 2017, followed by another comprehensive security update in 2018, both of which we released before this group reported this research to us.